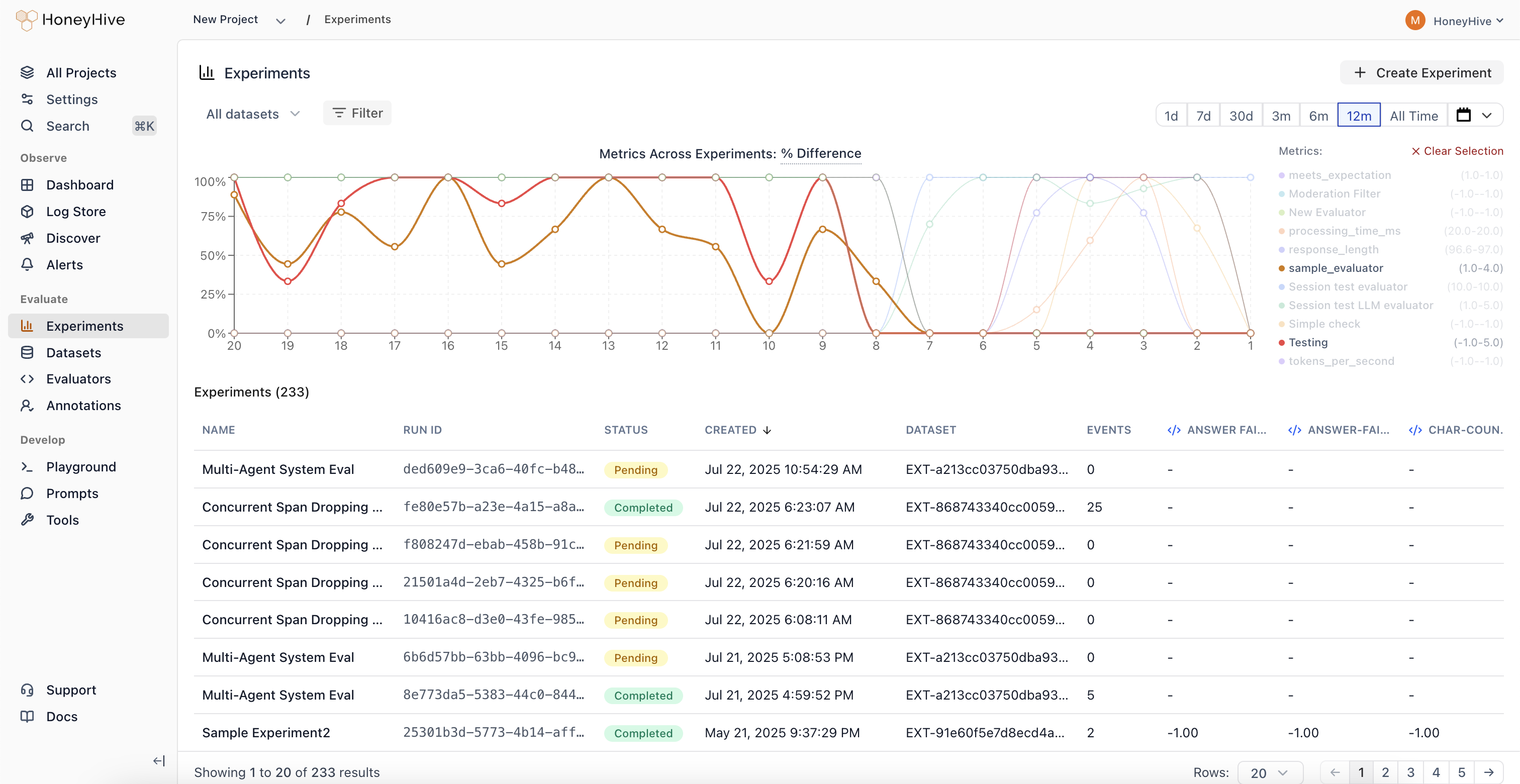

Experiments in HoneyHive help you systematically test and improve your AI applications. Whether you’re iterating on prompts, comparing models, or optimizing your RAG pipeline, experiments provide a structured way to measure improvements and ensure reliability.

Run Your First Experiment

New to experiments? Follow our hands-on tutorial to run your first experiment in 10 minutes.

Core Concepts

An experiment consists of three parts:

| Component | What it is | Example |
|---|---|---|
| Function | The code you want to evaluate | A prompt, RAG pipeline, or agent |
| Dataset | Test cases with inputs and expected outputs | Customer queries with correct intents |
| Evaluators | Functions that score outputs | Accuracy check, LLM-as-judge |
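
As a rough illustration of how the three parts relate, the sketch below expresses each one as plain Python. The names classify_intent and exact_match, and the inputs / ground_truth dictionary keys, are assumptions made for this example rather than the SDK's required schema.

```python
# Function: the code under evaluation (a hypothetical keyword-based intent classifier).
def classify_intent(inputs: dict) -> str:
    query = inputs["query"].lower()
    if "refund" in query:
        return "refund_request"
    if "password" in query:
        return "account_access"
    return "other"


# Dataset: test cases pairing inputs with the expected output.
# The "inputs" / "ground_truth" keys are an assumed layout for this sketch.
dataset = [
    {"inputs": {"query": "I want a refund for my order"}, "ground_truth": "refund_request"},
    {"inputs": {"query": "I forgot my password"}, "ground_truth": "account_access"},
    {"inputs": {"query": "Where is my package?"}, "ground_truth": "shipping_status"},
]


# Evaluator: scores one output against its ground truth (1.0 = correct, 0.0 = wrong).
def exact_match(output: str, ground_truth: str) -> float:
    return 1.0 if output == ground_truth else 0.0


# One score per test case; the third query is misclassified as "other".
scores = [exact_match(classify_intent(d["inputs"]), d["ground_truth"]) for d in dataset]
```

The third test case fails on purpose: a dataset that includes inputs the function handles poorly is what lets an evaluator surface something worth fixing.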

Why Use Experiments?

- Iterate with confidence - Test prompt variations, model configurations, and architectural changes against consistent metrics

- Track improvements - Monitor how changes affect key metrics over time

- Automate quality checks - Run experiments in CI/CD pipelines to catch issues before deployment

- Compare approaches - Evaluate different models, retrieval methods, or chunking strategies side-by-side

- Ensure reliability - Catch regressions by testing across diverse scenarios before deploying

How It Works

When you call evaluate():

- Run - Your function executes on each datapoint (with automatic tracing)

- Score - Evaluators measure each output against ground truth

- Aggregate - HoneyHive computes metrics (average, min, max)

- View - Results appear in the dashboard for analysis
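
The run, score, and aggregate steps above can be pictured with a small stand-alone loop. This is a toy stand-in written with the standard library only, not the SDK's implementation; run_experiment and the dataset layout are hypothetical, and tracing and the dashboard are left out.

```python
from statistics import mean
from typing import Any, Callable


def run_experiment(
    function: Callable[[dict], Any],
    dataset: list[dict],
    evaluators: dict[str, Callable[[Any, Any], float]],
) -> dict[str, dict[str, float]]:
    """Toy version of the run -> score -> aggregate flow (not the SDK's code)."""
    per_metric: dict[str, list[float]] = {name: [] for name in evaluators}
    for datapoint in dataset:
        # Run: execute the function on one datapoint.
        output = function(datapoint["inputs"])
        # Score: every evaluator compares the output with the ground truth.
        for name, evaluator in evaluators.items():
            per_metric[name].append(evaluator(output, datapoint["ground_truth"]))
    # Aggregate: summarize each metric across all datapoints.
    return {
        name: {"average": mean(scores), "min": min(scores), "max": max(scores)}
        for name, scores in per_metric.items()
    }


dataset = [
    {"inputs": {"text": "hello"}, "ground_truth": "HELLO"},
    {"inputs": {"text": "World"}, "ground_truth": "WORLD"},
    {"inputs": {"text": "ok"}, "ground_truth": "Ok"},  # deliberately mismatched
]
summary = run_experiment(
    function=lambda inputs: inputs["text"].upper(),
    dataset=dataset,
    evaluators={"exact_match": lambda out, truth: float(out == truth)},
)
```

Here summary holds one entry per evaluator, each with an average, min, and max computed over the three datapoints.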

Trace Linking

Every execution creates a traced session with metadata that links it to:

- run_id - Groups all traces from a single experiment run together. By default, evaluate() auto-generates a UUID for this, but you can pass a custom run_id to correlate results with CI pipelines or other external systems

- datapoint_id - Identifies which test case produced each trace

These links enable:

- Same datapoint, different runs - Compare how prompt v1 vs v2 handled the same input

- Aggregate metrics - See average accuracy across all test cases in a run

- Regression detection - Identify which specific inputs degraded between versions
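
To make the role of the two identifiers concrete, here is a minimal sketch of regression detection over exported trace records. Only run_id and datapoint_id come from the description above; the record layout, the accuracy field, and the helper names are assumptions for illustration.

```python
# Hypothetical trace records: one per (run, datapoint) pair.
traces = [
    {"run_id": "prompt-v1", "datapoint_id": "dp-1", "accuracy": 1.0},
    {"run_id": "prompt-v1", "datapoint_id": "dp-2", "accuracy": 1.0},
    {"run_id": "prompt-v1", "datapoint_id": "dp-3", "accuracy": 0.0},
    {"run_id": "prompt-v2", "datapoint_id": "dp-1", "accuracy": 1.0},
    {"run_id": "prompt-v2", "datapoint_id": "dp-2", "accuracy": 0.0},
    {"run_id": "prompt-v2", "datapoint_id": "dp-3", "accuracy": 1.0},
]


def scores_by_datapoint(run_id: str) -> dict[str, float]:
    """Collect one run's scores, keyed by datapoint_id."""
    return {t["datapoint_id"]: t["accuracy"] for t in traces if t["run_id"] == run_id}


def find_regressions(baseline_run: str, candidate_run: str) -> list[str]:
    """Return datapoint_ids whose score dropped from baseline to candidate."""
    baseline = scores_by_datapoint(baseline_run)
    candidate = scores_by_datapoint(candidate_run)
    shared = baseline.keys() & candidate.keys()
    return sorted(dp for dp in shared if candidate[dp] < baseline[dp])


regressions = find_regressions("prompt-v1", "prompt-v2")
```

Both runs have the same average accuracy in this data, yet the per-datapoint join still flags dp-2. That is the case aggregate metrics alone would miss.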

Auto-Instrumenting LLM Providers

Use the instrumentors parameter to automatically trace LLM calls from third-party libraries (OpenAI, Anthropic, etc.) during experiments. Each zero-argument factory or constructor is called per datapoint so every datapoint gets its own instrumentor instance for proper trace isolation.
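
The per-datapoint factory pattern can be sketched without any provider library. FakeInstrumentor and run_datapoints below are hypothetical stand-ins, not real SDK or instrumentor classes; the point is only that passing a callable, rather than an instance, yields a fresh object for each datapoint.

```python
class FakeInstrumentor:
    """Hypothetical stand-in for a provider instrumentor; not a real library class."""

    def __init__(self) -> None:
        self.spans: list[str] = []

    def record(self, span: str) -> None:
        self.spans.append(span)


def run_datapoints(datapoints: list[str], instrumentor_factories: list) -> list[list[str]]:
    """Create fresh instrumentors per datapoint and return the spans each one saw."""
    captured = []
    for datapoint in datapoints:
        # Call each zero-argument factory afresh, so no state is shared across datapoints.
        instrumentors = [factory() for factory in instrumentor_factories]
        for instrumentor in instrumentors:
            instrumentor.record(f"llm-call:{datapoint}")
        captured.append([span for inst in instrumentors for span in inst.spans])
    return captured


# Pass the class itself (a zero-argument constructor), not an instance.
spans_per_datapoint = run_datapoints(["dp-1", "dp-2"], [FakeInstrumentor])
```

Had a single shared instance been passed instead, the second datapoint's list would also contain the first datapoint's span, which is the cross-contamination that per-datapoint construction avoids.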

Async Function Support

evaluate() accepts both synchronous and async functions. Async functions are automatically detected and executed with asyncio.run() inside worker threads, with no extra configuration needed.
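
One plausible way to implement this kind of detection uses only the standard library, as sketched below. call_in_worker and the two pipeline functions are hypothetical names for this example, and the SDK's internals may differ.

```python
import asyncio
import inspect
from concurrent.futures import ThreadPoolExecutor


def call_in_worker(function, inputs):
    """Run `function` for one datapoint, driving it to completion if it is async."""
    if inspect.iscoroutinefunction(function):
        # A worker thread has no running event loop, so asyncio.run() can start one.
        return asyncio.run(function(inputs))
    return function(inputs)


def sync_pipeline(inputs):
    return inputs["x"] * 2


async def async_pipeline(inputs):
    await asyncio.sleep(0)  # stands in for an awaited LLM or retrieval call
    return inputs["x"] * 2


with ThreadPoolExecutor(max_workers=2) as pool:
    sync_result = pool.submit(call_in_worker, sync_pipeline, {"x": 21}).result()
    async_result = pool.submit(call_in_worker, async_pipeline, {"x": 21}).result()
```

Because each coroutine gets its own event loop inside its worker thread, the caller sees the same plain return value from both kinds of function.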

Parallel Execution

Control concurrency with max_workers (default: 10). Datapoints run on a worker thread pool, with up to max_workers executing at the same time. Each datapoint still gets its own isolated tracer instance.

| Setting | Use Case |
|---|---|
| max_workers=1 | Sequential execution (debugging) |
| max_workers=5 | Conservative (strict API rate limits) |
| max_workers=10 | Balanced (default) |
| max_workers=20 | Aggressive (fast, watch rate limits) |
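
The effect of a worker cap can be observed with a plain ThreadPoolExecutor, as in the sketch below. This is an illustration of the general thread-pool behavior and not the SDK's code; MAX_WORKERS, process, and the stats dictionary are names invented for the example.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

MAX_WORKERS = 3  # plays the role of max_workers in this sketch

stats = {"active": 0, "peak": 0}
lock = threading.Lock()


def process(datapoint: int) -> int:
    """Simulate one datapoint's work while tracking how many run concurrently."""
    with lock:
        stats["active"] += 1
        stats["peak"] = max(stats["peak"], stats["active"])
    time.sleep(0.05)  # stands in for an LLM call
    with lock:
        stats["active"] -= 1
    return datapoint * 2


with ThreadPoolExecutor(max_workers=MAX_WORKERS) as pool:
    # map() returns results in input order even though execution overlaps.
    results = list(pool.map(process, range(10)))
```

After the run, stats["peak"] never exceeds MAX_WORKERS, which is why lowering the cap is a simple way to stay under a provider's rate limit.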

Controlling Results Output

By default, evaluate() prints a formatted results table to the console after each run. Disable this with print_results=False.

Git Context

When you run evaluate() from a Git repository, the SDK automatically captures Git metadata on each experiment run:

- Commit hash and branch name

- Author and remote URL

- Dirty status (whether there are uncommitted changes)

This metadata appears under metadata.git on the experiment run in the dashboard, making it easy to trace any result back to the exact code that produced it. No configuration is needed - if git is available and you’re inside a repo, the context is collected automatically.
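
For a sense of what such a capture involves, the sketch below gathers the same kinds of fields with standard git commands. It is not the SDK's collector: collect_git_context and the dictionary key names are assumptions, and the actual metadata.git schema may differ.

```python
import subprocess
from typing import Optional


def _git(*args: str, check: bool = True) -> str:
    """Run a git command in the current directory and return its trimmed stdout."""
    result = subprocess.run(
        ["git", *args], capture_output=True, text=True, check=check
    )
    return result.stdout.strip()


def collect_git_context() -> Optional[dict]:
    """Return commit metadata, or None when git or a repository is unavailable."""
    try:
        return {
            "commit": _git("rev-parse", "HEAD"),
            "branch": _git("rev-parse", "--abbrev-ref", "HEAD"),
            "author": _git("log", "-1", "--format=%an"),
            # Empty string when no "origin" remote is configured.
            "remote_url": _git("config", "--get", "remote.origin.url", check=False),
            # Any porcelain output means there are uncommitted changes.
            "dirty": bool(_git("status", "--porcelain")),
        }
    except (OSError, subprocess.CalledProcessError):
        # git is not installed, or the working directory is not a usable repository.
        return None


git_context = collect_git_context()
```

Returning None instead of raising mirrors the "no configuration needed" behavior described above: outside a repository, the run simply proceeds without Git metadata.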

For a deeper understanding of the framework design and evaluation philosophy, see Evaluation Framework.

Next Steps

Run Your First Experiment

Hands-on tutorial to get started in 10 minutes

Compare Runs

Identify improvements and regressions across versions

CI Regression Detection

Gate every pull request on evaluation metrics via GitHub Actions

Create Evaluators

Build code, LLM-as-judge, or human evaluators

Manage Datasets

Create and version test datasets in HoneyHive