LLM evaluators use large language models to evaluate the quality of AI-generated responses based on custom criteria. They’re ideal for qualitative evaluations like coherence, relevance, faithfulness, and tone.

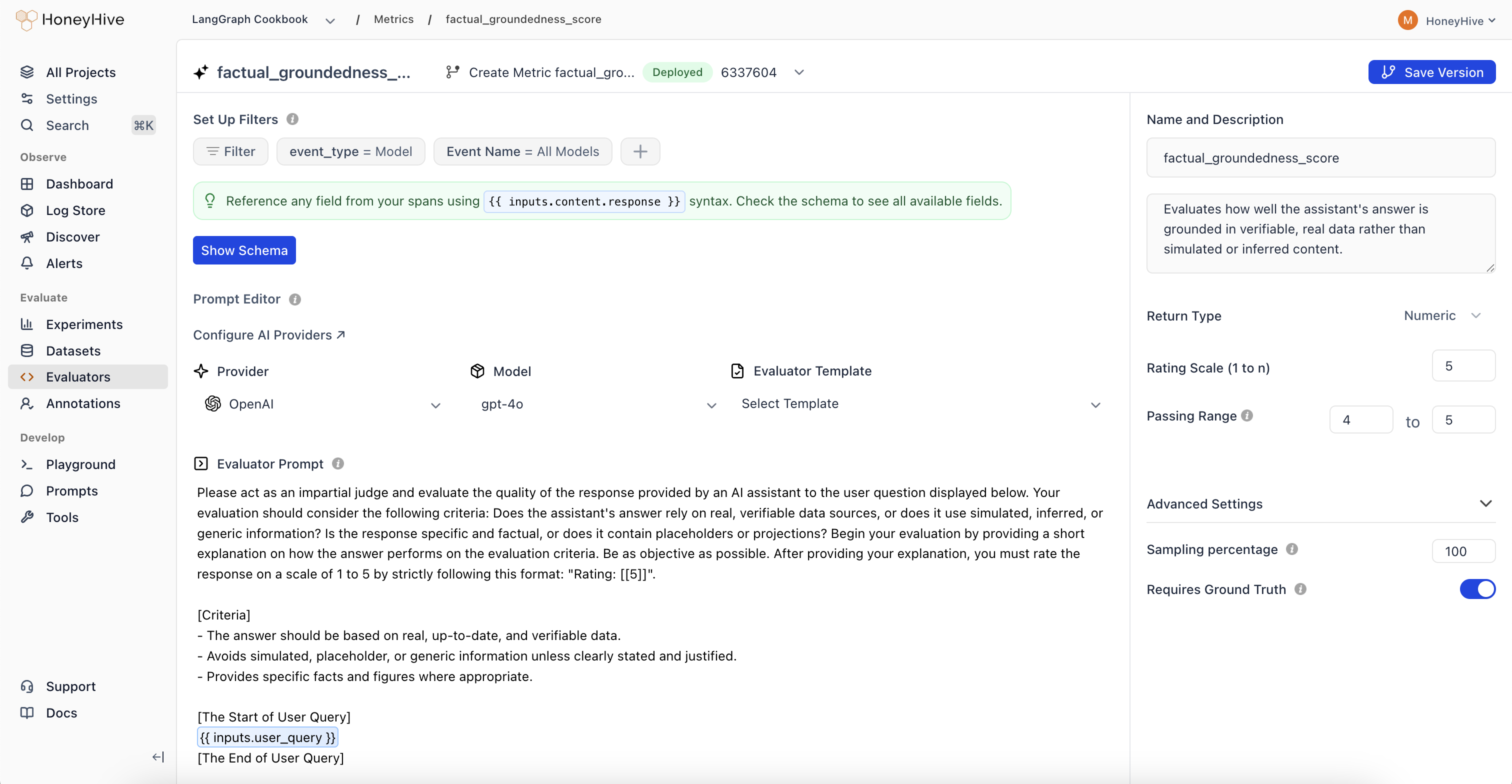

Creating an LLM Evaluator

- Navigate to the Evaluators tab in the HoneyHive console.

- Click Add Evaluator and select LLM Evaluator.

LLM evaluators use your configured AI provider. Set up provider keys in Provider Keys to use models from OpenAI, Anthropic, or other providers.

Event Schema

LLM evaluators operate on event objects from your traces. Use {{ }} syntax to reference event properties in your prompt.

| Property | Description | Example |
|---|---|---|
| event_type | Type of event: model, tool, chain, or session | {{ event_type }} |
| event_name | Name of the event or session | {{ event_name }} |
| inputs | Input data (prompt, query, context, etc.) | {{ inputs.question }} |
| outputs | Output data (completion, response, etc.) | {{ outputs.content }} |
| feedback | User feedback and ground truth | {{ feedback.ground_truth }} |

For detailed event schema documentation and tracing setup, see Configuring Tracing for Server-Side Evaluators.

Evaluation Prompt

Define your evaluation prompt using the {{ }} syntax to inject event data.
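To make the placeholder syntax concrete, here is a minimal Python sketch of how {{ }} references could resolve against an event. The sample event, the prompt wording, and the `lookup` and `render` helpers are all hypothetical illustrations. HoneyHive performs the substitution for you, and its actual rendering logic may differ.

```python
import re

# Hypothetical sample event; field names follow the Event Schema table.
event = {
    "event_type": "model",
    "event_name": "answer_question",
    "inputs": {"question": "What is the capital of France?"},
    "outputs": {"content": "Paris is the capital of France."},
    "feedback": {"ground_truth": "Paris"},
}

# Hypothetical evaluation prompt using {{ }} placeholders.
PROMPT_TEMPLATE = """Rate how well the answer matches the ground truth on a 1-5 scale.
Question: {{ inputs.question }}
Answer: {{ outputs.content }}
Ground truth: {{ feedback.ground_truth }}
Respond with a single integer."""

# Matches {{ path }} where path is a dot-separated property name.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def lookup(data, dotted_path):
    """Walk a nested dict along a dot-separated path such as 'inputs.question'."""
    value = data
    for key in dotted_path.split("."):
        value = value[key]
    return value


def render(template, data):
    """Replace every {{ path }} placeholder with the matching event value."""
    return PLACEHOLDER.sub(lambda m: str(lookup(data, m.group(1))), template)


rendered_prompt = render(PROMPT_TEMPLATE, event)
print(rendered_prompt)
```

In this sketch a placeholder that points at a missing property raises a `KeyError`, which makes typos in property paths easy to spot while drafting a prompt locally.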

Looking for ready-made examples? Check out our LLM Evaluator Templates.

Configuration

Return Type

- Boolean: For true/false evaluations

- Numeric: For scores or ratings (e.g., 1-5)

- String: For categorical labels or text responses
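As a rough illustration of what the three return types mean for the evaluator's reply, the sketch below converts a raw text reply into a Boolean, a number, or a string. The `parse_result` function and the accepted spellings ("true", "yes", and so on) are assumptions made for this example, not HoneyHive's documented parsing behavior.

```python
def parse_result(raw: str, return_type: str):
    """Convert a raw text reply into the configured return type (hypothetical helper)."""
    text = raw.strip()
    if return_type == "boolean":
        lowered = text.lower()
        if lowered in ("true", "yes"):
            return True
        if lowered in ("false", "no"):
            return False
        raise ValueError(f"not a boolean reply: {raw!r}")
    if return_type == "numeric":
        return float(text)  # raises ValueError on non-numeric replies
    if return_type == "string":
        return text
    raise ValueError(f"unknown return type: {return_type!r}")
```

Whatever the actual parsing looks like, the practical takeaway is the same: instruct the model in your prompt to reply in exactly the shape the return type expects, such as a single integer for a Numeric evaluator.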

Passing Range

Define the range of scores that indicate a passing evaluation. Useful for CI/CD pipelines and identifying failed test cases.

Enabled

Toggle to run this evaluator on all traces that match your event filters.

Sampling Percentage

Run your evaluator on a percentage of matching events to manage costs. New evaluators default to 10% sampling. Adjust based on event volume and cost budget; for example, set 25% to evaluate one in four matching events.

Sampling applies to all traces that match your event filters. To evaluate only a subset of events, combine sampling with specific event filters.
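The passing range and sampling percentage can be pictured with a short Python sketch. Both helpers are hypothetical. The inclusive range bounds are an assumption, and hash-based sampling is just one common way to select a fixed share of events, not necessarily how HoneyHive samples.

```python
import hashlib


def passes(score: float, low: float, high: float) -> bool:
    """True when the score falls inside the passing range (assumed inclusive)."""
    return low <= score <= high


def is_sampled(event_id: str, sampling_percentage: float) -> bool:
    """Deterministically select roughly sampling_percentage% of events by hashing the ID."""
    digest = hashlib.sha256(event_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return bucket < sampling_percentage


# Hypothetical scores on a 1-5 scale with a passing range of 4-5.
scores = {"case-a": 5, "case-b": 2, "case-c": 4}
failed = [name for name, score in scores.items() if not passes(score, 4, 5)]
print(failed)  # ['case-b']
```

A CI job could fail the build whenever a list like `failed` is non-empty, which is the kind of check a passing range is meant to support.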

Event Filters

Use Set Up Filters to specify which events trigger this evaluator. Filters are ANDed together: an event must match all filters to be evaluated.

Preset Filters

Every evaluator includes two preset filters by default:

- Event Type: Filter by model, tool, chain, or session

- Event Name: Target a specific event name, or use “All” (e.g., “All Models”) to match any event of that type

Additional Filters

Click the + button to add filters on any event property. You can filter on any field available in your event schema, including nested properties using dot notation (e.g., inputs.question, metadata.model, outputs.content).

Each filter consists of:

- Field: Any property from the event schema

- Operator: Depends on the field type (see below)

- Value: The value to compare against

| Field Type | Operators |
|---|---|
| String | is, is not, contains, not contains, exists, not exists |
| Number | is, is not, greater than, less than, exists, not exists |
| Boolean | is, exists, not exists |
| Datetime | is, is not, after, before, exists, not exists |
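The AND semantics and dot-notation lookup described above can be sketched in Python. The `get_field`, `matches_filter`, and `matches_all` helpers, the tuple format for filters, and the sample event are hypothetical. The sketch covers the string and number operators from the table, and treating a comparison on a missing field as a non-match is an assumption.

```python
_MISSING = object()  # sentinel for "field not present in the event"


def get_field(event: dict, path: str):
    """Resolve a dot-notation path; return _MISSING when any segment is absent."""
    value = event
    for key in path.split("."):
        if not isinstance(value, dict) or key not in value:
            return _MISSING
        value = value[key]
    return value


OPERATORS = {
    "is": lambda actual, expected: actual == expected,
    "is not": lambda actual, expected: actual != expected,
    "contains": lambda actual, expected: expected in actual,
    "not contains": lambda actual, expected: expected not in actual,
    "greater than": lambda actual, expected: actual > expected,
    "less than": lambda actual, expected: actual < expected,
}


def matches_filter(event: dict, field: str, operator: str, value=None) -> bool:
    """Evaluate a single (field, operator, value) filter against an event."""
    actual = get_field(event, field)
    if operator == "exists":
        return actual is not _MISSING
    if operator == "not exists":
        return actual is _MISSING
    if actual is _MISSING:
        return False  # assumption: comparisons on a missing field never match
    return OPERATORS[operator](actual, value)


def matches_all(event: dict, filters: list) -> bool:
    """Filters are ANDed together: every filter must match."""
    return all(matches_filter(event, *f) for f in filters)


# Hypothetical event and filter set.
event = {
    "event_type": "model",
    "event_name": "answer_question",
    "inputs": {"question": "What is RAG?"},
    "metadata": {"model": "gpt-4o"},
}
filters = [
    ("event_type", "is", "model"),
    ("inputs.question", "contains", "RAG"),
    ("metadata.model", "exists"),
]
print(matches_all(event, filters))  # True
```

Adding one more filter that the event does not satisfy, such as `("outputs.content", "exists")` here, makes the whole set fail, which mirrors how an event must match every filter to be evaluated.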

Next Steps

- Python Evaluators: Create code-based evaluators for programmatic checks

- Evaluator Templates: Ready-to-use LLM and Python evaluator templates

- Run Experiments: Use evaluators in offline experiments

- Human Annotation: Set up human review workflows

- Manage as Code: Check evaluators into your repo and apply them with the CLI