Python evaluators let you write custom evaluation logic that runs on HoneyHive’s infrastructure. Use them for format validation, metric calculations, or any programmatic assessment of your AI outputs.

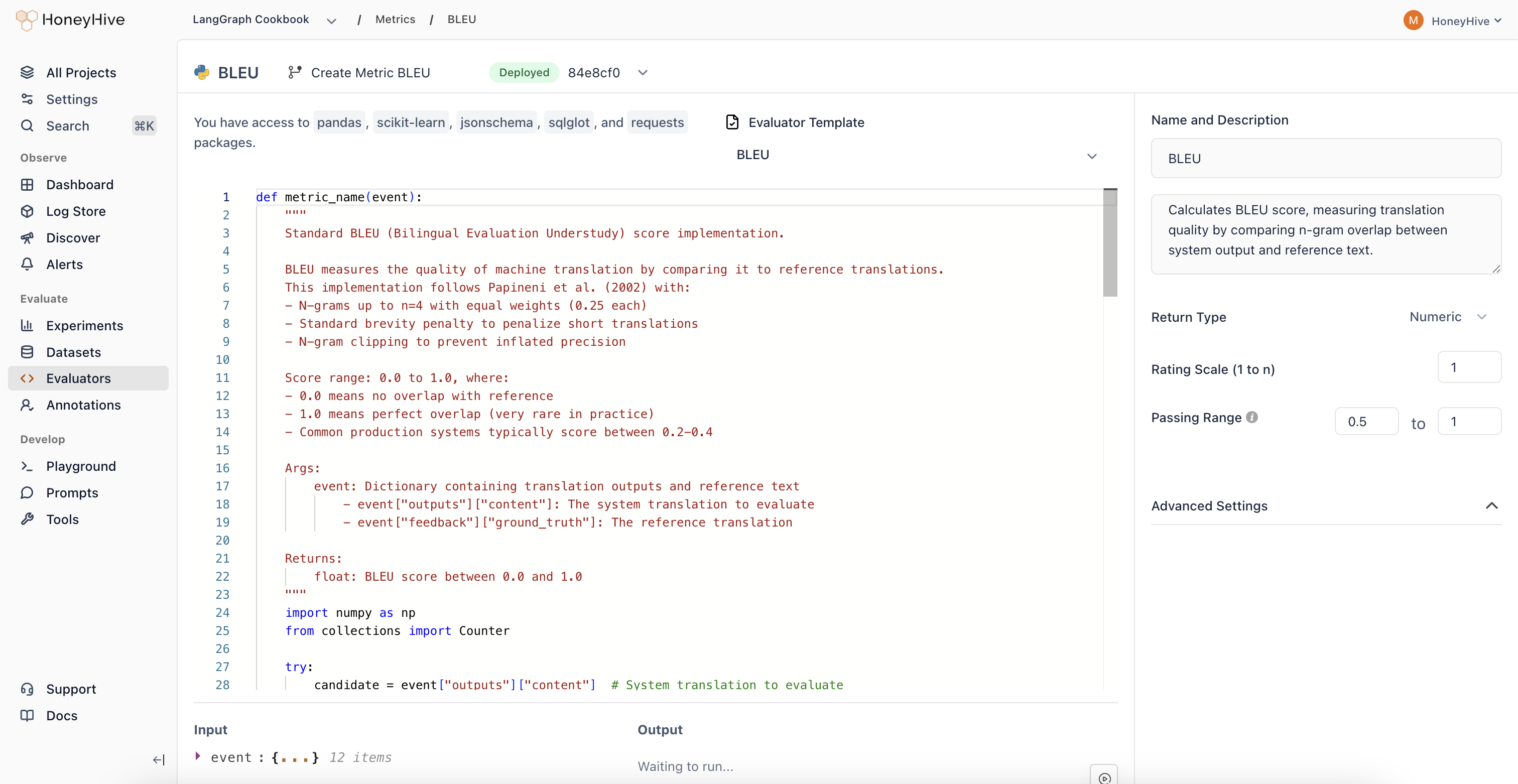

Creating a Python Evaluator

- Navigate to the Evaluators tab in the HoneyHive console.

- Click Add Evaluator and select Python Evaluator.

Event Schema

Python evaluators operate on an event object representing a span in your traces. The following fields are available as top-level variables in your evaluator code:

| Variable | Description |
|---|---|
| event | The full event object (dict with all fields below) |
| inputs | Input data for the event |
| outputs | Output data from the event |
| feedback | User feedback and ground truth |
| metadata | Additional event metadata |
| metrics | Scores from other evaluators that have already run on this event (e.g. metrics.get("relevance")) |
| config | Configuration used for this event (model, hyperparameters, template) |
| event_type | Type: model, tool, chain, or session |
| event_name | Name of the specific event |
| event_id | Unique identifier for this event |
| session_id | Session this event belongs to |
| project_id | Project this event belongs to |
| source | Source of the event |
| start_time | Event start timestamp (Unix ms) |
| end_time | Event end timestamp (Unix ms) |
| duration | Event duration in milliseconds |
| error | Error message string if the event failed, otherwise None |
| user_properties | Custom user properties attached to the event |
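As a rough illustration of how several of these variables might be combined, the sketch below flags failed or slow events and reads a score left by an earlier evaluator. The stand-in values at the top are assumptions so the snippet runs locally; on HoneyHive these variables are provided for you. The function name, the 2000 ms budget, and the 0.5 relevance cutoff are hypothetical.

```python
# Stand-ins for variables HoneyHive provides (assumed values, local testing only).
duration = 1200                 # event duration in milliseconds
error = None                    # error message string, or None on success
metrics = {"relevance": 0.8}    # scores from evaluators that already ran

MAX_DURATION_MS = 2000          # hypothetical latency budget
MIN_RELEVANCE = 0.5             # hypothetical relevance cutoff


def healthy_event():
    """Return True if the event succeeded, stayed within budget, and was relevant."""
    if error is not None:
        return False
    if duration > MAX_DURATION_MS:
        return False
    relevance = metrics.get("relevance")
    # A missing relevance score is treated as acceptable rather than as a failure.
    return relevance is None or relevance >= MIN_RELEVANCE
```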

Evaluator Function

Define your evaluation logic in a Python function. The function must take no arguments and access event data through the top-level variables. Alternatively, assign a value to a variable named result.

If your code assigns to result, that value is used directly. Otherwise, HoneyHive calls the first callable it finds in your code. If you define helper functions, put them before your main evaluator function so the main one is found first.
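A minimal sketch of both styles follows. It assumes outputs is a dict with a "content" key holding the response text; the stand-in assignment at the top exists only so the snippet runs locally and would be omitted on HoneyHive. The function name and the 200-character limit are hypothetical.

```python
# Stand-in for the variable HoneyHive provides (assumed value, local testing only).
outputs = {"content": "The capital of France is Paris."}


def within_length_limit():
    """Return True when the output text is non-empty and at most 200 characters."""
    text = outputs.get("content") or ""
    return 0 < len(text) <= 200


# Alternative style: skip the function and assign the value directly.
# result = 0 < len(outputs.get("content") or "") <= 200
```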

Looking for ready-made examples? Check out our Python Evaluator Templates.

Available Packages

The following packages are available for import in your evaluator code:

| Package | Use Case |
|---|---|
| json | JSON parsing and serialization |
| re | Regular expressions |
| math, statistics | Numerical computations |
| collections | Specialized data structures |
| datetime | Date/time handling |
| string, itertools, functools, operator | Standard library utilities |
| pandas | DataFrames and data manipulation (in-memory only) |
| numpy | Numerical arrays and math |
| sklearn | Machine learning utilities (e.g. cosine similarity, metrics) |
| jsonschema | JSON schema validation |
| sqlglot | SQL parsing and validation |
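For instance, a format-validation evaluator might need nothing beyond json. The sketch below assumes the model's response is a JSON string stored under outputs["content"]; the stand-in value, the function name, and the required keys are hypothetical.

```python
import json

# Stand-in for the variable HoneyHive provides (assumed value, local testing only).
outputs = {"content": '{"answer": "Paris", "confidence": 0.92}'}

REQUIRED_KEYS = {"answer", "confidence"}  # hypothetical schema for illustration


def valid_json_output():
    """Return True if the output parses as a JSON object with the required keys."""
    text = outputs.get("content") or ""
    try:
        parsed = json.loads(text)
    except json.JSONDecodeError:
        return False
    if not isinstance(parsed, dict):
        return False
    return REQUIRED_KEYS.issubset(parsed)
```

For stricter checks (types, nested fields), the same idea could be expressed with jsonschema from the table above.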

Sandbox Restrictions

Python evaluators run in a sandboxed environment with these limits:

- Code size: 4KB maximum
- range() limit: range() is capped at 999 elements. For larger iterations, iterate over a list directly (e.g. outputs["content"].split()), which has no iteration limit.
- No file I/O: open() in write mode and package-level I/O functions (e.g. pd.read_csv, np.load) are blocked
- No network access: HTTP requests and remote data fetching (e.g. sklearn.datasets.fetch_*) are not available
- Import restrictions: Only the packages listed above can be imported
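To illustrate the range() workaround, the sketch below walks a 1,500-word output by iterating over the list itself instead of indexing with range(len(words)). The stand-in value and the function name are hypothetical.

```python
# Stand-in for the variable HoneyHive provides (assumed value, local testing only).
outputs = {"content": "word " * 1500}  # more items than the range() cap allows


def count_long_words():
    """Count words of four or more characters without using range()."""
    words = outputs["content"].split()
    # Iterate over the list directly rather than over range(len(words)).
    return sum(1 for word in words if len(word) >= 4)
```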

Configuration

Event Filters

Filter which events this evaluator runs on using event type, event name, and additional property filters. See Event Filters for the full list of supported filter options and operators.

Return Type

- Boolean: For true/false evaluations
- Numeric: For scores or ratings (configure the scale, e.g., 1-5)
- String: For categorical outputs
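The three return types could look like the sketch below. Each function would normally live in its own evaluator; they appear together here only for comparison. The stand-in value, the function names, and the scoring rules are hypothetical.

```python
# Stand-in for the variable HoneyHive provides (assumed value, local testing only).
outputs = {"content": "I'm sorry, I can't help with that."}


def is_refusal():
    """Boolean: True if the output opens with a common refusal phrase."""
    text = (outputs.get("content") or "").lower()
    return text.startswith(("i'm sorry", "i cannot"))


def length_score():
    """Numeric: a 1-5 score that grows by one for every ten words."""
    word_count = len((outputs.get("content") or "").split())
    return min(5, max(1, word_count // 10 + 1))


def tone_label():
    """String: a categorical label for the output."""
    return "refusal" if is_refusal() else "answer"
```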

Passing Range

Define pass/fail criteria for your evaluator. Useful for CI builds and detecting failed test cases.

Advanced Settings

Expand to configure:

- Requires Ground Truth: Enable if your evaluator needs feedback.ground_truth
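An evaluator that depends on ground truth might look like the sketch below. It assumes feedback is a dict with a ground_truth entry holding a string, and that the response text lives under outputs["content"]; the stand-in values and the function name are hypothetical.

```python
# Stand-ins for variables HoneyHive provides (assumed values, local testing only).
feedback = {"ground_truth": "Paris"}
outputs = {"content": "  paris "}


def exact_match():
    """Return True if the output equals the ground truth, ignoring case and padding."""
    expected = feedback.get("ground_truth")
    if expected is None:
        # Without ground truth there is nothing to compare against.
        return False
    actual = outputs.get("content") or ""
    return actual.strip().lower() == str(expected).strip().lower()
```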

Production Settings

After creating an evaluator, you can enable it for production traces from the Evaluators table:

- Enabled: Toggle to run this evaluator on all traces that match your event filters
- Sampling %: When enabled, set a sampling percentage to control costs. The default is 10% (one in ten matching events)

Using with Experiments

When enabled, server-side evaluators automatically run on all traces that match your event filters, including experiment traces. When you run evaluate(), your enabled server-side evaluators score the results without any additional code.

Troubleshooting

| Symptom | Cause | Fix |
|---|---|---|
| ImportError: Import of module 'X' is not allowed | Module not in the allowed list | Use only available packages |
| PermissionError: file and network I/O is not allowed | Attempted file write or network call | Use in-memory operations only (e.g. df.to_dict() instead of df.to_csv("file.csv")) |
| Metric execution timed out | Code exceeded the execution timeout | Optimize your logic or reduce data processing |
| Code snippet exceeds maximum size | Code over 4KB | Simplify your evaluator or extract helper logic |
| No function was defined and no 'result' was assigned | Missing return value | Either define a function or assign to result |
| SyntaxError | Code has Python syntax errors | Check your code in a local Python environment first |
| Evaluator auto-disabled | 100+ failures within 1 hour | Fix the underlying error, then re-enable from the Evaluators table. See auto-disable details. |
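Several of these errors can be caught before pasting code into the console. The sketch below is a hypothetical local pre-check, not part of HoneyHive: it runs on your own machine, and it assumes "4KB" means 4096 bytes of UTF-8 text. The preflight name and its messages are illustrative.

```python
import ast

MAX_CODE_BYTES = 4096  # assumption: the 4KB limit read as 4096 bytes


def preflight(code: str) -> list[str]:
    """Return a list of likely problems with an evaluator snippet (empty if none)."""
    problems = []
    if len(code.encode("utf-8")) > MAX_CODE_BYTES:
        problems.append("code exceeds the assumed 4096-byte limit")
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        problems.append(f"syntax error on line {exc.lineno}: {exc.msg}")
        return problems
    # Look only at top-level statements: a function definition or `result = ...`.
    has_function = any(isinstance(node, ast.FunctionDef) for node in tree.body)
    assigns_result = any(
        isinstance(node, ast.Assign)
        and any(isinstance(t, ast.Name) and t.id == "result" for t in node.targets)
        for node in tree.body
    )
    if not (has_function or assigns_result):
        problems.append("no function defined and no 'result' assigned")
    return problems
```

Calling preflight() on the text of an evaluator returns an empty list when none of these three checks fail. It does not detect disallowed imports, timeouts, or blocked I/O.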

Run Your First Experiment

Get started with experiments

Experiments Framework

Learn how experiments and evaluators work together

Manage as Code

Check evaluators into your repo and apply them with the CLI