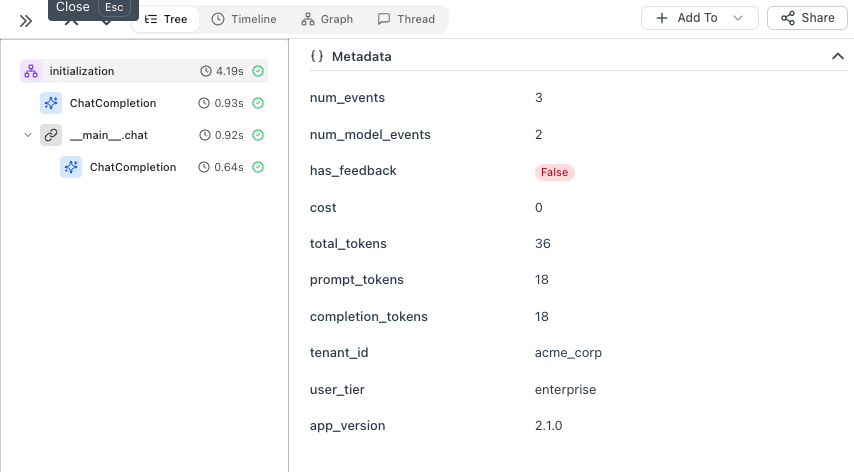

- Add session-level context (environment, app version)

- Add metadata directly to LLM spans

- Add metadata to parent spans for complex pipelines

Setup with Session Context

First, initialize the tracer and set session-level context that applies to ALL traces:

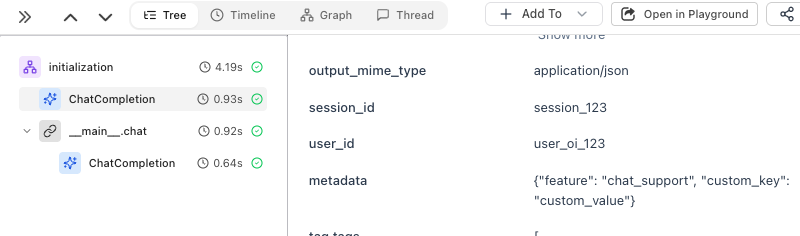

Option 1: Enrich LLM Spans Directly

The simplest way to add per-call metadata - useusing_attributes from OpenInference:

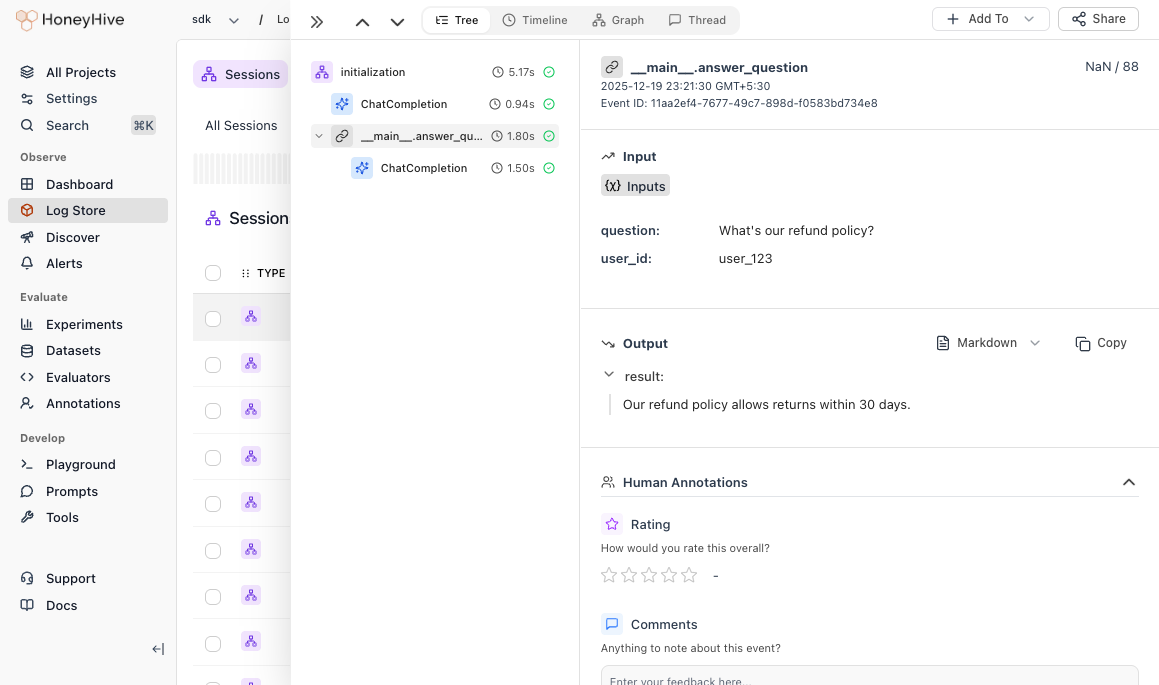

Option 2: Create a Parent Span

When you have multiple steps (retrieval, processing, LLM calls), use@trace to create a parent span that groups them:

When to Use Which

| Use Case | Pattern |

|---|---|

| Tenant, user tier, app version | enrich_session() |

| User ID, feature for LLM calls | using_attributes |

| Pipeline with multiple LLM calls | @trace on the entry point |

Best Practices

DO: Add user IDs, feature names, environment. Use descriptive keys (user_id not uid).

DON’T: Include passwords, API keys, or PII. Keep fields under 1KB.